Spark's `Column` class provides a `like` method, but as of Spark 1.6.0 / 2.0.0 it works only with string literals, so the pattern cannot come from another column. You can still use raw SQL, which in Spark 1.5 requires a `HiveContext`. After importing `HiveContext`, create it with `val sqlContext = new HiveContext(sc)` (make sure you use `HiveContext`), optionally add `import sqlContext.implicits._` (just to be able to use `toDF`), and run `sqlContext.sql("SELECT * FROM df WHERE a LIKE CONCAT('%', b, '%')")`. Alternatively, use `expr` / `selectExpr`: `df.selectExpr("a like CONCAT('%', b, '%')")`.

If for some reason a Hive context is not an option, you can use a custom UDF after importing `udf` from the SQL functions: `val simple_like = udf((s: String, p: String) => s.`…

PySpark `when()` is a SQL function: you import it before use, and it returns a Column. `otherwise()` is a method of Column. Usage looks like `when(condition).otherwise(default)`, and the default may itself be a column, as in `.otherwise(df.gender).alias("new_gender")`. When `otherwise()` is not used and none of the conditions is met, the result is None (null). A related NULL helper is `nvl(expr1, expr2)` (Databricks SQL and Databricks Runtime), which returns `expr2` if `expr1` is NULL, or `expr1` otherwise.

To keep things simple, the multiple-conditions example uses a new DataFrame, `df5`, with a `code` column: `df5.withColumn("new_column", when((col("code") == "a") | (col("code") == "d"), "A"))`. Use `==` for comparison, and wrap each condition in parentheses, because in Python `|` and `&` bind more tightly than `==`.

In this article, you have learned how to use PySpark SQL "case when" and "when otherwise" on a DataFrame, with examples such as checking for NULL/None and combining multiple conditions with the AND (&) and OR (|) logical operators.

PySpark also provides `Column.ilike(other)`, an SQL ILIKE expression (case-insensitive LIKE) that returns a boolean Column based on a case-insensitive match. The parameter `other` is a str holding a SQL LIKE pattern. For example, `df.filter(df.name.ilike('%Ice')).collect()` returns `[Row(age=2, name='Alice')]`. DBMSs such as Snowflake, PostgreSQL and CockroachDB support ILIKE in SQL, so adding it to Spark SQL makes migration from these popular DBMSs easier.
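The case-insensitive matching that `ilike()` performs, and the ANY/SOME/ALL forms of ILIKE that Spark SQL also accepts, can be modeled in plain Python to make the semantics concrete. This is a minimal sketch under stated assumptions, not Spark's implementation: `like_to_regex`, `ilike`, `ilike_any` and `ilike_all` are hypothetical helpers, NULL handling and LIKE escape characters are not modeled, and case-insensitivity is approximated with `re.IGNORECASE`.

```python
import re
from typing import Iterable


def like_to_regex(pattern: str) -> str:
    """Translate a SQL LIKE pattern into a Python regex body (assumed mapping).

    '%' -> '.*' (any run of characters, possibly empty)
    '_' -> '.'  (exactly one character)
    Everything else is matched literally; escape sequences such as '\\%'
    are deliberately not handled in this sketch.
    """
    return "".join(
        ".*" if ch == "%" else "." if ch == "_" else re.escape(ch) for ch in pattern
    )


def ilike(value: str, pattern: str) -> bool:
    # fullmatch: LIKE must match the whole string, not a substring.
    # IGNORECASE approximates ILIKE; DOTALL lets '%' and '_' match newlines too.
    regex = like_to_regex(pattern)
    return re.fullmatch(regex, value, flags=re.IGNORECASE | re.DOTALL) is not None


def ilike_any(value: str, patterns: Iterable[str]) -> bool:
    # ILIKE ANY (...) / ILIKE SOME (...): true if at least one pattern matches.
    return any(ilike(value, p) for p in patterns)


def ilike_all(value: str, patterns: Iterable[str]) -> bool:
    # ILIKE ALL (...): true only if every pattern matches.
    return all(ilike(value, p) for p in patterns)


print(ilike("Alice", "%Ice"))               # True
print(ilike("Alice", "Ice"))                # False: no wildcard, so the whole string must equal "ice"
print(ilike_any("Alice", ["bob%", "al%"]))  # True
print(ilike_all("Alice", ["al%", "%bob"]))  # False
```

The last two lines show why the `%` in the `ilike('%Ice')` example matters: without a wildcard, ILIKE compares the whole value.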
With ILIKE there is no need to wrap columns in `lower(colname)` in WHERE clauses. Quantified patterns are supported as well, with the syntax `ILIKE (ANY | SOME | ALL) (pattern+)`. The motivation for the change is to improve the user experience with Spark SQL.

We often need to check multiple conditions. PySpark when/otherwise supports this by combining conditions with the & (and) and | (or) operators.

Multiple Conditions using & and | operator

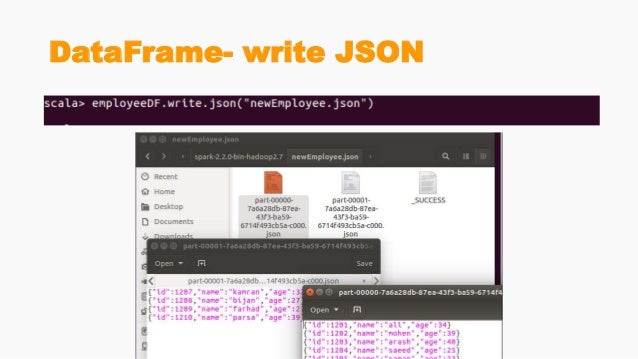

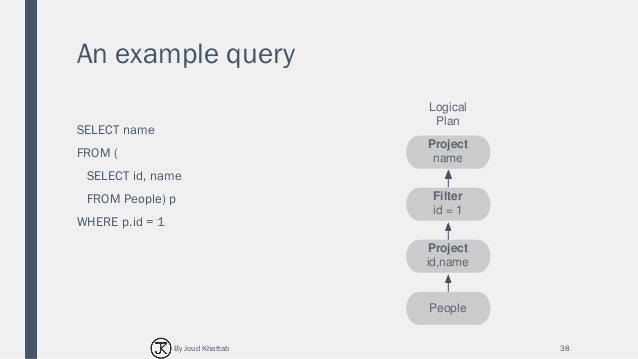
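The evaluation order behind a `when().when().otherwise()` chain with `&` / `|` conditions can be modeled in plain Python. This is a minimal sketch, not Spark's implementation: `when_otherwise` and the row dictionaries are hypothetical, the `amt` column and the `"B"` branch are assumed for illustration (only the `code` condition comes from the `df5` example), and Python's `or` / `and` stand in for Column `|` / `&`. A condition that is not true (including NULL) falls through to the next branch.

```python
from typing import Any, Callable, Dict, List, Optional, Sequence, Tuple

Row = Dict[str, Any]
# (condition, value): value is a literal, or a callable standing in for a Column.
Branch = Tuple[Callable[[Row], bool], Any]

_NO_DEFAULT = object()  # sentinel meaning ".otherwise() was not called"


def _resolve(value: Any, row: Row) -> Any:
    # A callable plays the role of a Column expression evaluated on this row.
    return value(row) if callable(value) else value


def when_otherwise(row: Row, branches: Sequence[Branch], default: Any = _NO_DEFAULT) -> Optional[Any]:
    """Evaluate a when(...).when(...).otherwise(...) chain on one row.

    Branches are tried in order and the first true condition wins. If no
    branch matches and no default was given, the result is None (null).
    """
    for condition, value in branches:
        if condition(row):
            return _resolve(value, row)
    return None if default is _NO_DEFAULT else _resolve(default, row)


# Hypothetical rows: "code" mirrors df5; "amt" is an assumed extra column.
rows: List[Row] = [
    {"code": "a", "amt": 1},
    {"code": "d", "amt": 2},
    {"code": "b", "amt": 4},
    {"code": "b", "amt": 5},
]
branches: List[Branch] = [
    # (col("code") == "a") | (col("code") == "d")  ->  "A"
    (lambda r: r["code"] == "a" or r["code"] == "d", "A"),
    # hypothetical: (col("code") == "b") & (col("amt") == 4)  ->  "B"
    (lambda r: r["code"] == "b" and r["amt"] == 4, "B"),
]

print([when_otherwise(r, branches) for r in rows])
# ['A', 'A', 'B', None]: the last row matches nothing and there is no default
print([when_otherwise(r, branches, default=lambda r: r["code"]) for r in rows])
# ['A', 'A', 'B', 'b']: falls back to the row's own value, like .otherwise(col("code"))
```

Because the first true branch wins, overlapping conditions should be ordered from most to least specific.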

To replicate the case-insensitive ILIKE, you can use `lower()` in conjunction with `like()`. After importing `lower` and `col`: `df.where(lower(col('col1')).like('string')).show()`. Because the column values are lowercased, the pattern must be written in lowercase as well. More generally, you can use `like()` to filter DataFrame rows by single or multiple conditions, to derive a new column, or inside a `when().otherwise()` expression.

Using when() otherwise() on PySpark DataFrame

`when()` takes two parameters: the first is a condition and the second is a literal value or a Column. If the condition evaluates to true, it returns the value from the second parameter.

You can also use CASE WHEN in a SQL statement after registering the DataFrame as a temporary view (for example with `df.createOrReplaceTempView("EMP")`):

`spark.sql("select name, CASE WHEN gender = 'M' THEN 'Male' WHEN gender = 'F' THEN 'Female' WHEN gender IS NULL THEN '' ELSE gender END as new_gender from EMP").show()`

The same CASE expression can be passed to `expr()` inside `withColumn()` or `select()`, after importing `expr` and `col`:

`df3 = df.withColumn("new_gender", expr("CASE WHEN gender = 'M' THEN 'Male' WHEN gender = 'F' THEN 'Female' WHEN gender IS NULL THEN '' ELSE gender END"))`

`df4 = df.select(col("*"), expr("CASE WHEN gender = 'M' THEN 'Male' WHEN gender = 'F' THEN 'Female' WHEN gender IS NULL THEN '' ELSE gender END").alias("new_gender"))`
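What the CASE expression in these examples does to each row can be spelled out as a plain Python function. This is an illustrative sketch only: `new_gender` is a hypothetical helper that mirrors the branch order of the SQL, not something Spark generates.

```python
from typing import Optional


def new_gender(gender: Optional[str]) -> str:
    """Row-level model of:

    CASE WHEN gender = 'M' THEN 'Male'
         WHEN gender = 'F' THEN 'Female'
         WHEN gender IS NULL THEN ''
         ELSE gender END
    """
    # In SQL, NULL = 'M' evaluates to NULL (not true), so a NULL gender skips the
    # first two branches; in Python, None == "M" is simply False, with the same effect.
    if gender == "M":
        return "Male"
    if gender == "F":
        return "Female"
    if gender is None:
        return ""
    return gender  # ELSE gender: any other value passes through unchanged


print([new_gender(g) for g in ["M", "F", None, "X"]])  # ['Male', 'Female', '', 'X']
```

The branch order matters only for overlapping conditions; here the conditions are mutually exclusive, so any order gives the same result.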

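The examples use a DataFrame built with `df = spark.createDataFrame(data = data, schema = columns)`, where `data` includes rows such as `("Robert", None, 400000)` and `("Maria", "F", 500000)`. The `None` in Robert's row is what the NULL-handling branches check.

In Spark and PySpark, the `like()` function is similar to the SQL LIKE operator: it matches on the wildcard characters percent (`%`, any sequence of characters) and underscore (`_`, exactly one character) to filter rows.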
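The wildcard rules of `like()`, and why `lower()` plus `like()` needs a lowercase pattern, can be checked with a small plain-Python model. This is a sketch under stated assumptions, not Spark's implementation: `like`, `lower_like` and `where` are hypothetical helpers, the sample names are illustrative, and LIKE escape characters are not handled.

```python
import re
from typing import Callable, Iterable, List, Optional


def like(value: Optional[str], pattern: str) -> Optional[bool]:
    """Model of SQL `value LIKE pattern`.

    '%' matches any run of characters (possibly empty) and '_' matches exactly
    one character; everything else is literal. A NULL (None) value gives None,
    as in SQL's three-valued logic.
    """
    if value is None:
        return None
    regex = "".join(".*" if c == "%" else "." if c == "_" else re.escape(c) for c in pattern)
    return re.fullmatch(regex, value, flags=re.DOTALL) is not None


def lower_like(value: Optional[str], pattern: str) -> Optional[bool]:
    # Mirrors lower(col).like(pattern): the value is lowercased, the pattern is not.
    return None if value is None else like(value.lower(), pattern)


def where(values: Iterable[Optional[str]], predicate: Callable[[Optional[str]], Optional[bool]]) -> List[Optional[str]]:
    # Like DataFrame.where/filter: keep only rows whose predicate is True;
    # rows where it is False or None (NULL) are dropped.
    return [v for v in values if predicate(v) is True]


names = ["Robert", "Maria", "rob", None]  # hypothetical sample values
print(where(names, lambda v: like(v, "Rob%")))        # ['Robert']
print(where(names, lambda v: like(v, "M_ria")))       # ['Maria']
print(where(names, lambda v: lower_like(v, "rob%")))  # ['Robert', 'rob']
print(where(names, lambda v: lower_like(v, "Rob%")))  # []: an uppercase pattern never matches
```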
